AI Is Expensive—But It Doesn’t Have to Be

AI adoption is accelerating—but so are infrastructure costs.

Many organizations jumped into cloud-based AI assuming flexibility would outweigh costs. Instead, they’re now facing unpredictable billing, expensive GPU usage, and long-term cost inefficiencies.

The reality?

AI is no longer a cloud-only game. On-prem infrastructure is making a strong comeback—smarter, faster, and far more cost-efficient.

Minnesota Computers works with businesses that are rethinking how they build AI environments, focusing on performance, scalability, and cost control without unnecessary spend.

Why Cloud-Only AI Strategies Are Falling Short

Cloud platforms offer convenience, but they come with trade-offs—especially for AI workloads.

AI applications such as large language model (LLM) inference and machine learning training require high-performance GPUs, massive memory bandwidth, and continuous compute usage. In the cloud, this translates into:

- High hourly GPU costs

- Data transfer and storage fees

- Long-term vendor lock-in

- Limited cost predictability

Over time, these costs compound—making cloud-first strategies unsustainable for many mid-sized and enterprise businesses.

This is why IT leaders are now asking a smarter question:

What workloads should stay in the cloud—and what should move on-prem?

The Shift Back to On-Prem AI Infrastructure

On-premises infrastructure is no longer outdated. It has evolved.

Modern on-prem AI environments offer:

- High-performance GPU servers

- Scalable storage solutions

- Low-latency processing

- Full cost control

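More importantly, they replace metered, per-hour cloud compute charges with a fixed capital investment that can pay back as long-term ROI. Power, cooling, and maintenance remain as ongoing costs, but these are typically easier to forecast than usage-based billing.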

With the right setup, on-prem infrastructure can handle:

- Model inference at scale

- Internal AI applications

- Data-sensitive workloads

- Hybrid cloud integrations

This makes it a powerful alternative—or complement—to cloud-based AI.

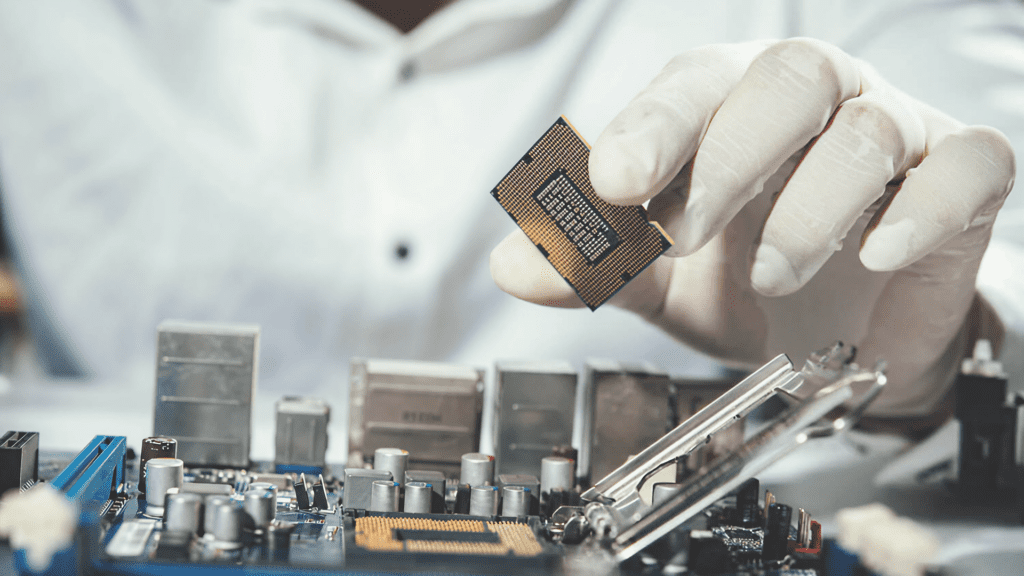

The Hardware That Actually Matters for AI Workloads

When building AI-ready infrastructure, not all hardware decisions carry equal weight. Overspending on the wrong components is one of the most common mistakes.

GPUs: The Core of AI Performance

Graphics Processing Units (GPUs) are the backbone of AI workloads. Whether running inference or training models, GPU performance directly impacts speed and efficiency.

However, businesses don’t always need the latest, most expensive GPUs. Many enterprise-grade, slightly older models still deliver exceptional performance at a fraction of the cost.

Memory (RAM): Enabling Large Models

AI workloads require substantial memory, both system RAM and GPU memory, especially for handling large datasets and models. Insufficient memory leads to bottlenecks, slowing down processing and limiting scalability.

A balanced configuration ensures smooth performance without unnecessary overspending.
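As a rough illustration of how model size drives memory needs, the sketch below estimates the memory required just to hold a model's weights: parameter count multiplied by bytes per parameter. The function names, the example model size, and the 20% overhead factor are assumptions for illustration, not figures from a specific product. Real workloads also need room for activations, caches, and data buffers.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory needed to hold model weights, in GB (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


def fits_in_memory(num_params: float, bytes_per_param: float,
                   available_gb: float, overhead_factor: float = 1.2) -> bool:
    """Check whether weights plus an assumed overhead margin fit in memory.

    overhead_factor is a hypothetical safety margin, not a measured value.
    """
    needed_gb = weight_memory_gb(num_params, bytes_per_param) * overhead_factor
    return needed_gb <= available_gb


# Hypothetical example: a 7-billion-parameter model stored at 16-bit precision
# (2 bytes per parameter) needs about 14 GB for weights alone.
print(weight_memory_gb(7e9, 2))
print(fits_in_memory(7e9, 2, available_gb=24))  # fits with the assumed margin
print(fits_in_memory(7e9, 2, available_gb=16))  # does not fit with the margin
```

Running the same check at lower precision (for example, 1 byte per parameter) shows how a smaller numeric format can bring a model within reach of less expensive hardware.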

Storage: Speed + Capacity

AI systems rely on fast data access. NVMe SSDs and high-throughput storage solutions are essential for maintaining performance, particularly during training and real-time inference.

CPU & Networking: Supporting the Ecosystem

While GPUs handle most computations, CPUs and networking infrastructure ensure smooth coordination across systems. High-speed interconnects and efficient processing pipelines are critical for overall system performance.

Why Refurbished GPU Servers Are a Strategic Advantage

One of the biggest misconceptions in IT procurement is that “new equals better.”

In reality, certified refurbished servers deliver enterprise-grade performance at significantly lower costs. (Invicta Pcs)

Refurbished infrastructure offers:

Significant Cost Savings

Businesses can save 30–50% or more compared to new hardware—freeing up budget for scaling AI initiatives. (Invicta Pcs)

Proven Reliability

Enterprise hardware is designed for longevity. When properly tested and certified, refurbished systems can perform at the same level as new ones.

Faster Deployment

Unlike new hardware, which may have long lead times, refurbished systems are often readily available—allowing faster implementation.

Sustainability Benefits

Extending the hardware lifecycle reduces e-waste and supports environmentally responsible IT practices.

At Minnesota Computers, businesses gain access to tested, certified, and cost-efficient infrastructure designed to meet modern AI demands.

A Phased Approach to Building AI Infrastructure

Instead of large upfront investments, a phased approach allows businesses to scale intelligently.

Phase 1: Start with Inference Workloads

Begin by deploying infrastructure for AI inference—customer-facing applications, automation tools, or internal analytics. This provides immediate ROI with manageable investment.

Phase 2: Optimize and Expand

Analyze performance, identify bottlenecks, and expand GPU capacity or storage as needed. This ensures every upgrade is driven by actual demand—not assumptions.

Phase 3: Introduce Training Capabilities

Once infrastructure is stable, businesses can move into model training or fine-tuning. This step requires additional compute power but unlocks greater control and customization.

Phase 4: Hybrid Integration

Combine on-prem infrastructure with cloud resources for peak workloads. This hybrid model provides flexibility without sacrificing cost efficiency.
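One way to reason about a hybrid setup is to look at hourly GPU demand and see how much of it fits within on-prem capacity and how much would spill over to the cloud. The sketch below does that split. The function name and the demand figures are hypothetical, chosen only to illustrate the idea.

```python
def split_demand(hourly_gpu_demand: list[int], on_prem_gpus: int) -> tuple[int, int]:
    """Split GPU demand into on-prem GPU-hours and cloud overflow GPU-hours.

    Each entry in hourly_gpu_demand is the number of GPUs needed in that hour.
    Demand up to on_prem_gpus is served locally; the rest bursts to the cloud.
    """
    on_prem_hours = 0
    cloud_hours = 0
    for demand in hourly_gpu_demand:
        on_prem_hours += min(demand, on_prem_gpus)
        cloud_hours += max(demand - on_prem_gpus, 0)
    return on_prem_hours, cloud_hours


# Hypothetical demand profile with a short peak in the middle of the day.
demand = [2, 3, 4, 8, 10, 4, 3, 2]
on_prem, cloud = split_demand(demand, on_prem_gpus=4)
print(on_prem, cloud)  # 26 GPU-hours on-prem, 10 GPU-hours in the cloud
```

In this made-up profile, most GPU-hours run on owned hardware and only the peak is billed at cloud rates, which is the trade-off the hybrid phase aims for.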

Total Cost of Ownership: The Metric That Actually Matters

The biggest mistake organizations make is comparing upfront costs instead of total cost of ownership (TCO).

Cloud may seem cheaper initially—but over time:

- Monthly compute costs accumulate

- Data transfer fees increase

- Scaling becomes expensive

On-prem infrastructure, on the other hand:

- Requires upfront investment

- Delivers predictable, long-term cost savings

- Improves ROI over time

When evaluated over 2–3 years, on-prem AI infrastructure often proves to be significantly more cost-effective.
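A simple way to make this comparison concrete is to add up cumulative costs month by month and find the point where on-prem becomes cheaper. The sketch below does this under stated assumptions: all dollar amounts are hypothetical placeholders, and the `monthly_operating` parameter (power, cooling, maintenance) is an assumption added for illustration rather than a figure from this article.

```python
def cloud_cost(months: int, monthly_cloud: float) -> float:
    """Cumulative cloud spend: recurring compute, storage, and transfer fees."""
    return months * monthly_cloud


def on_prem_cost(months: int, upfront_hardware: float,
                 monthly_operating: float) -> float:
    """Cumulative on-prem spend: one-time hardware plus ongoing operating costs."""
    return upfront_hardware + months * monthly_operating


def break_even_month(monthly_cloud: float, upfront_hardware: float,
                     monthly_operating: float, horizon_months: int = 60):
    """Return the first month where on-prem is no more expensive than cloud.

    Returns None if on-prem does not break even within the horizon.
    """
    for month in range(1, horizon_months + 1):
        if on_prem_cost(month, upfront_hardware, monthly_operating) <= cloud_cost(month, monthly_cloud):
            return month
    return None


# Hypothetical inputs, for illustration only.
monthly_cloud = 5500        # compute plus data fees per month
upfront_hardware = 60000    # one-time server purchase
monthly_operating = 1500    # power, cooling, maintenance per month

print(break_even_month(monthly_cloud, upfront_hardware, monthly_operating))  # 15
print(cloud_cost(36, monthly_cloud))                                         # 198000
print(on_prem_cost(36, upfront_hardware, monthly_operating))                 # 114000
```

With these placeholder numbers, on-prem breaks even at month 15 and is cheaper over three years. Different inputs can move the break-even point well outside that window, so the calculation is worth rerunning with real quotes and real usage data.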

How Minnesota Computers Helps You Build Smarter Infrastructure

Minnesota Computers specializes in helping businesses maximize performance without overspending.

Their expertise covers:

- Enterprise servers and GPU systems

- Refurbished and new hardware solutions

- Scalable infrastructure planning

- Cost-optimized IT procurement

They enable organizations to build AI-ready environments that are:

- High-performance

- Budget-conscious

- Scalable for future growth

Their approach is simple: Build infrastructure based on real needs—not inflated assumptions.

Smarter AI Starts with Smarter Infrastructure

AI success is no longer just about algorithms; it’s about infrastructure strategy. Businesses that rely solely on the cloud will continue to face rising costs. Those that adopt a balanced, on-prem-first or hybrid approach will gain:

- Better cost control

- Higher performance

- Long-term scalability

With the right hardware strategy—and the right partner like Minnesota Computers—AI becomes not just accessible, but sustainable.